In 2019 I bought a Tesla Model 3, and when I bought it I needed to make a decision on whether to buy it with Full Self-Driving capability. The technology wasn’t available yet, but by buying the capability in advance, I was promised I was getting a far better deal on it. FSD, as it’s called, cost $5,000 at that time on top of the price of the car. They gave no promise of when the full capability could be enabled.

Steve and I wanted to get an electric vehicle for environmental reasons, but we were also really interested in autonomous driving. Based on humanity’s historically bad record of paying attention while driving, I really look forward to the day when humans aren’t allowed to drive motor vehicles. We both believe in the self-driving future. That’s why I coughed up the extra $5K.

Last year, Steve bought a Tesla Model Y. By then, in 2020, the price to add non-enabled future Full Self-Driving had gone up to $7,000. For the same reason as before, Steve paid for FSD.

It’s now late 2021, and if you buy a Tesla and want Full Self-Driving, it will cost you $10,000 to add the capability. On the one hand, this makes me look like a smart shopper because I got a functionality I can’t use for only half the price! Tesla also has a subscription FSD program where you’re able to rent the capability month-to-month if the hardware in your Tesla supports FSD (all newer Teslas do).

The additional features that are currently available for those who pay for Full Self-Driving in the US include Auto Lane Change, Autopark, Summon, Smart Summon, and Navigate on Autopilot. Even without Full Self-Driving, Model S, X, 3, and Y Teslas support Traffic-Aware Cruise Control and Autosteer.

Navigate on Autopilot is pretty cool and works fairly well. It’s designed for freeway/highway use. If you select a destination in your Tesla and engage Navigate on Autopilot, your car will drive you from the freeway onramp to the offramp closest to your destination. Once you get on the freeway, the car will automatically steer to maintain your lane and drive you to your destination offramp, change lanes to pass slower cars, maintain a safe distance to the car in front of you, and even change freeways when required.

Of course, driver attentiveness is required while in any self-driving mode. The driver must be sufficiently alert to take over the drive if the autopilot behaves incorrectly. To help enforce this, the car will sense if you have not been holding or applying slight pressure to the steering wheel on a regular basis and will disengage the autopilot after a couple of warnings if it senses this condition repeatedly.

FSD Beta Safety Score

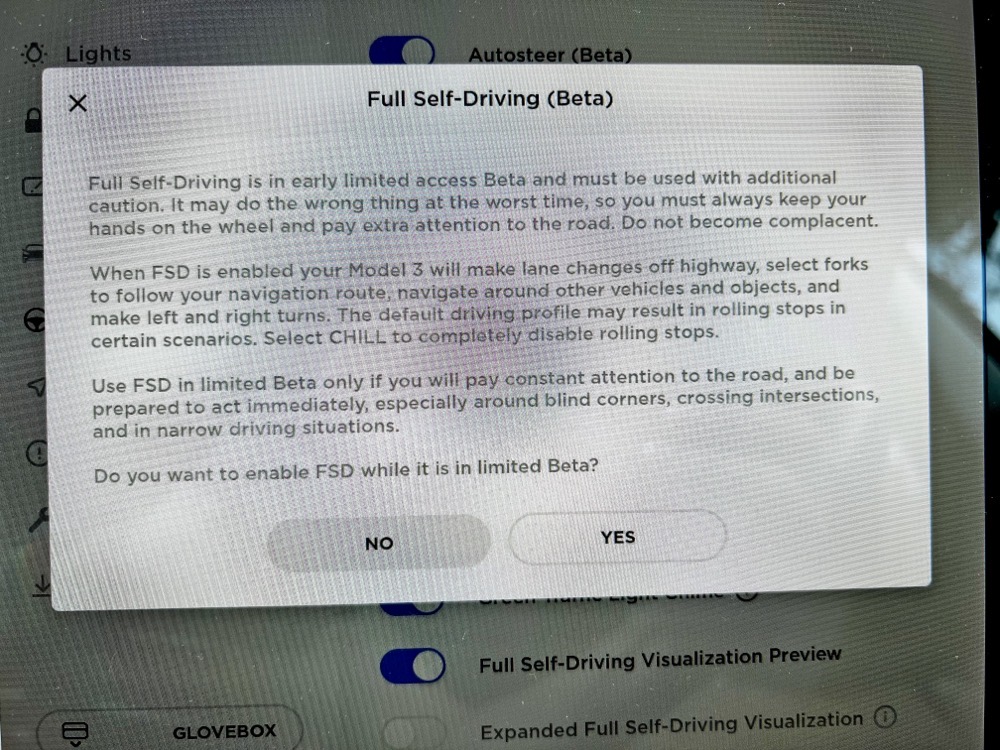

The reason I told you this entire back story is that Tesla has begun rolling out the beta of Full Self-Driving which provides additional self-driving features. You can think of FSD Beta as extending Navigate on Autopilot features from freeways to city streets.

This is clearly much more challenging since the car now needs to automatically recognize and navigate with traffic lights, stop signs, pedestrians, bicycles, unmarked roads, and other challenges not encountered on freeways.

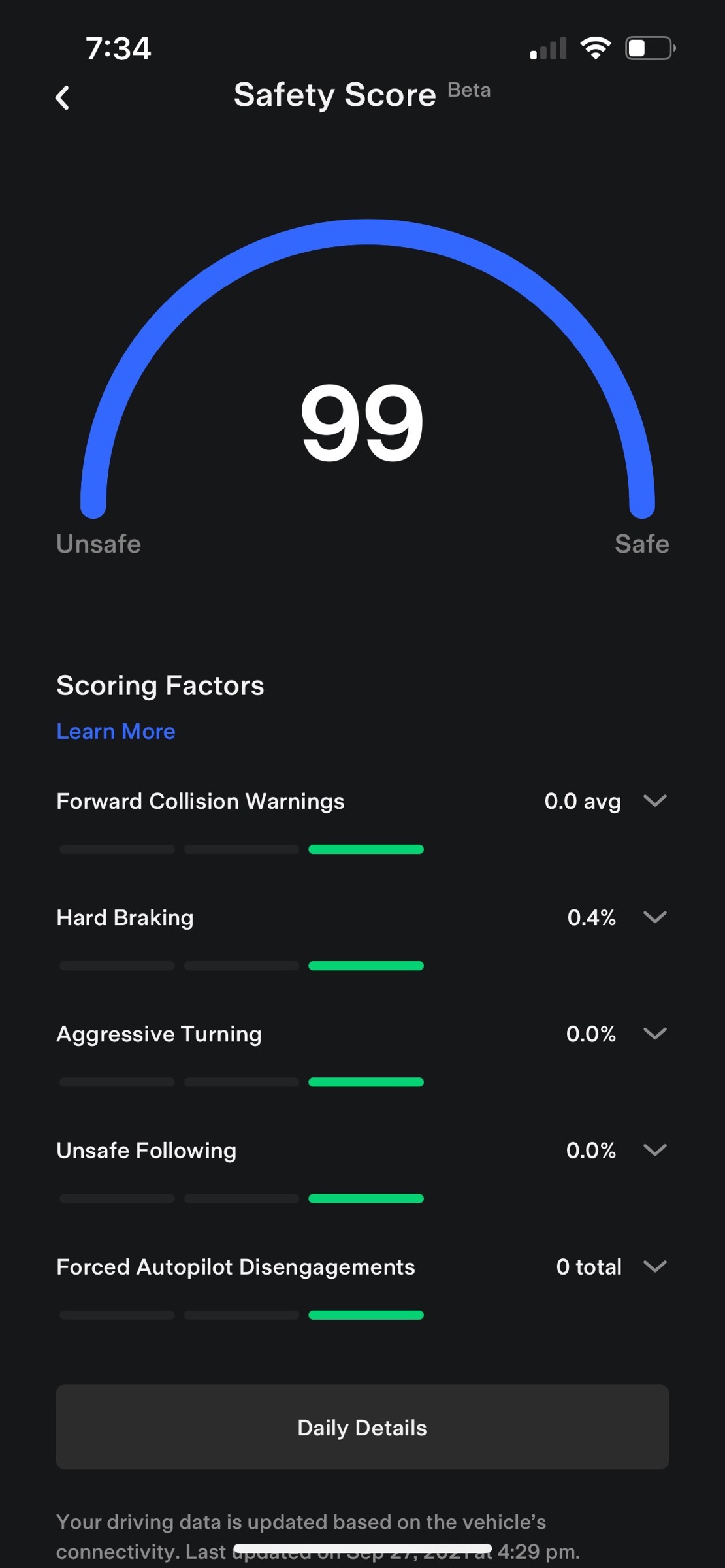

Steve was super excited about this and studied up on how to get in on the program. In order to qualify for the beta, you have to enable a monitoring app on your car that will track and score your driving over a rolling 30 day period. The Tesla mobile app shows you an aggregate Safety Score from 0 to 100, and also shows you a trip-by-trip score. The Safety Score is measured on 5 driving criteria:

- Forward Collision Warnings

- Hard Braking

- Aggressive Turning

- Unsafe Following

- Forced Autopilot Disengagements

I’d like you to think about what’s missing on this list of safety criteria. Where’s speeding? Where’s aggressive acceleration? Where’s cutting off a pedestrian? Where’s constantly swerving lane to lane to pass people? Where’s running a red light? None of those things makes a difference in your Safety Score.

Steve enabled the beta program on both of our cars and we tried to drive as carefully as we could in hopes of getting 100 points on our Safety Score. The FSD Beta software was going to roll out to the people with Scores of 100 first, then in a few weeks the 99’s, then the 98’s, and so on.

Before I tell you the rest of the story, I want you to know a couple of things. I am not the world’s best driver. I try to be but I don’t succeed. I must be a terrible driver if I can admit this because, according to a psychological study at New York University, 88% of Americans believe they’re better than average drivers. Think about that for a moment…

While I’m not a great driver, I don’t drive aggressively. I don’t tailgate, I don’t swerve around slower cars, I don’t slam on the brakes. I do love to accelerate quickly in my Model 3, and in fact, that’s my favorite thing about the car.

Steve, on the other hand, is a very good driver. I have been in the car when someone else makes a mistake and he instinctively makes the exact right move to avoid what looked like an inevitable accident. However, I would call him a mildly aggressive driver.

We started with the Safety Score monitoring, and within a few days, I was at 99 and he was at 96. You know I’m far too good of a person to rub his nose in that, right? Heck no, I was all over that. But then I got a single collision avoidance warning and my score dropped to a 79! Steve had every right to mock me for it but instead, he chose the high road and was sympathetic.

As I’ve said, I’m realistic about my driving, but I think Tesla’s collision avoidance warning isn’t working properly. I know, this sounds like justification for my bad driving, but hear me out.

Let’s say you’re driving on a straight road, and someone turns left in front of you to go into a side street. As a human, you can see their trajectory based on their current speed. They might suddenly stop in the middle of the turn, but given enough distance to stop if they do, you would proceed at your normal speed and pass well after they complete their turn.

Tesla’s collision avoidance warning loses its ever-loving mind when that happens. You can be 200 feet from the intersection and still get the warning.

We live off of a street with some very deep drainage dips at each intersection. (Remember these, because they come up in the plot again later.) As you drive down this street, each driver (if they’re paying attention) will slow down enough to go through the dip to avoid bottoming out their car, and then immediately speed up as they come out of the dip. I was driving along with plenty of room behind the car in front of me when they slowed down to take the dip. I got a collision avoidance warning for that. I guarantee you there was a 0% chance I was going to hit the person in front of me.

I also got a few aggressive turn warnings, but those were on me. The center of gravity of my car with its low profile and a giant, heavy battery underneath me makes it so much fun to take turns really quickly!

As it turns out, collision avoidance warnings do the most damage to your Safety Score. The Safety Score is also calculated based on incidents per 100 miles driven. After two collision avoidance warnings, getting my total score back up, given how few miles I drive, was like trying to get your GPA up in your senior year after fooling around in your freshman year.
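To put rough numbers on that GPA problem, here is a minimal Python sketch of a per-100-miles warning rate. It is only an illustration of the arithmetic, not Tesla's actual Safety Score formula: the function names, the mileage figures, and the target rate are all made up for the example, and it ignores the fact that incidents eventually age out of the rolling 30-day window.

```python
def warnings_per_100_miles(warnings: int, miles: float) -> float:
    """Warning count normalized to 100 miles driven (hypothetical helper)."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return warnings * 100 / miles


def clean_miles_needed(warnings: int, miles_so_far: float, target_rate: float) -> float:
    """Extra warning-free miles needed to get the rate down to target_rate.

    target_rate is in warnings per 100 miles. Assumes no incidents drop out
    of the window while you drive, which is a simplification.
    """
    if target_rate <= 0:
        raise ValueError("target_rate must be positive")
    total_miles_required = warnings * 100 / target_rate
    return max(0.0, total_miles_required - miles_so_far)


if __name__ == "__main__":
    # Made-up figures: same two warnings, very different mileage.
    low_mileage_driver = 150.0    # miles driven so far
    high_mileage_driver = 1200.0  # miles driven so far
    target = 0.5                  # hypothetical "good" rate per 100 miles

    for miles in (low_mileage_driver, high_mileage_driver):
        rate = warnings_per_100_miles(2, miles)
        extra = clean_miles_needed(2, miles, target)
        print(f"{miles:.0f} miles: {rate:.2f} warnings per 100 miles, "
              f"{extra:.0f} more clean miles to reach {target}")
```

With the same two warnings, the driver who covers fewer miles carries a much higher rate and needs far more clean driving to dilute it, which is exactly the hole I found myself in.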

Here’s the sad news. I successfully got to 99 before Steve. To be honest, I didn’t want to turn on FSD beta first, I wanted him to go first. He was so excited though that we enabled it on my car. As of the time I wrote this up, the current version of FSD Beta was 10.4. Tesla software versions are continually updated with bug fixes and enhancements, and right now 10.5 is on the horizon.

And now, after all that, I’ll tell you what I think of Tesla’s Full Self-Driving Beta.

Real-World Tests of FSD Beta

It’s terrible. It’s like a student driver … who is also drunk. I think calling it an alpha would be an insult to alpha software everywhere.

Let me elaborate by describing the notes we took on our very first test. We decided to drive 3.6 miles to our friend’s house with me at the wheel, and then the 3.6 miles back with Steve at the wheel. I will say that I’m really happy Steve was with me for the first test because I really don’t think he would have believed me if he hadn’t witnessed it himself.

I backed out of my driveway and pulled to the curb. There were a couple of trashcans a ways ahead of me next to the curb. I enabled Full Self-Driving with two downward strokes of the stalk on the steering column. The car drove straight at the trashcans and I had to slam on the brakes. I had driven 10 feet so far.

I enabled FSD again and let it drive to the first intersection where the light was red. It correctly turned on the right turn signal, but it didn’t pull to the right as it should have, and it didn’t make the turn against the red on its own. I’ve since learned that it will, eventually, turn right on a red light but it waits a very long time.

When the light turned green, the car made a successful right turn. It picked up speed till it was going 35mph which was the speed limit. I was on the road I described earlier with the very steep dips. It barreled right ahead at 35mph to one of these dips. I was forced to hit the brakes to avoid inevitable damage to my car.

I came up to another intersection where it correctly navigated into the left turn lane and put on the signal. This was a tricky intersection because it had a green arrow, but FSD Beta correctly recognized it and made the left turn. However, it made this turn in many small, jerky adjustments rather than a smooth curve.

But its bigger error was that as it made the turn, it headed right towards the curb of the median separating the oncoming lanes. I had to wrest control and turn properly into the lane. This particular error happened consistently throughout our first test drive.

As we were now toodling along at 40mph on a main road, the car suddenly slowed way down with no one in front of me. Great. Then it stopped at a green light. I could see no reason why it misinterpreted this particular light when it clearly understood the much more subtle left-turn arrow on an otherwise red light.

Later, as we continued on our trip, a driver coming in from the right cut me off, pulling in very close in front of me. FSD did not react at all, forcing me to hit the brakes to avoid a collision. After all the collision avoidance warnings it gave me, this mistake on its part really annoyed me.

On our drive, we encountered a T-intersection without any traffic control. The car inched forward and onscreen it said, “Autopilot creeping forward to check for visibility”. We thought that was pretty great.

At this point, we had driven an entire 3.6 miles. We stopped the car, waited for our heart rates to drop back down, and wiped our sweaty palms. Then we switched places, with Steve now behind the wheel.

It had new entertainment in store for Steve. For some reason, it started to automatically lower the speed of the vehicle to well below the speed limit. At one point it changed down to 22mph when we were in a 35mph zone. We couldn’t find any reason it was doing this and I haven’t experienced that again since.

We had a couple of left-turn pocket maneuvers, and it didn’t consistently make the turn when it was allowed to do so. What it did consistently do was slow way down just as it got into the left turn pocket. This is about the quickest way you can aggravate another human in Los Angeles. We had to keep hitting the accelerator when we went into left-turn pockets.

On each and every left turn, it also consistently tried to run over the curb of the median. Thinking maybe we were just nervous nellies and it wouldn’t have actually hit it but just gotten close, Steve let it go on its own at one intersection because the median was painted, and not a physical curb. Nope, the car drove probably a good foot over the double yellow line as it made the turn.

As we neared home, we were on a three-lane road where the right lane merges in shortly before a big intersection. This merge is clearly painted on the road. The car changed lanes from the center lane to the right lane just as the merge was starting. Knowing this was foolish, Steve pulled the car back to the left.

Now we were in a very wide lane as the two lanes narrowed down, so the car decided the center of that massive lane was the right place to be when a human driver would have known to hug the left side. We’ve seen it do this on merging lanes on the freeway too, so it’s definitely not getting the hang of it yet.

At this point, our 7.2-mile trip had ended and we were both emotionally exhausted.

Feedback

Feedback is important in any beta testing. Tesla has a couple of methods for this. They explicitly tell you to tap the video icon on the top of the screen that was added with the FSD beta software. Now think about this. I’ve got a drunk student driver in control of the car. Do you seriously want me to take my eyes off the road long enough to find a 1.5cm-wide icon on the screen and tap it so you can grab the video evidence of how the car messed up?

The other option, which is available on all Teslas no matter the software, is to use the right scroll button on the steering wheel. This button, when clicked in, engages voice commands. One thing you can do is say “Bug report” and, as quickly as you possibly can, describe what went wrong. I am serious about saying it quickly: you have maybe 6 seconds to blurt it out. I’m not sure that’s as good as capturing the video, so if I safely can, I will try to tap that little video icon, but not if it endangers my life or the lives of others.

Steve has continued to chase the elusive 99 score but he keeps getting kicked back to 98. Recently we were on a long drive and I can testify that he was at least 7 or 8 car lengths behind the car in front of us when the car gave off a collision avoidance warning. It was tragic because he had gotten up to 99 but he needed to hold it there, and because of the stupidity of the algorithm he lost his 99 yet again.

Steve has said that he’s driving much more cautiously than he used to and he’s noticed that he’s less anxious in some ways because of it. On the other hand, he’s anxious because he wants that score to go up so it’s been a very double-edged sword for him. The 98’s are getting into the beta soon with the release of FSD Beta v 10.5, so hopefully, the car won’t make any dumb judgments on him till then, and he’ll be able to enjoy the fun of having a drunk student driver in charge of a 5000lb vehicle.

We do have some unanswered questions about the beta program. One is whether our individual vehicles are actually learning. Do we have to let each car student drive frequently so that it gets the hang of it? I assumed, perhaps erroneously, that the algorithms were all coming down from the cloud and the collective was learning, but based on how bad it is, we’re wondering if that might be incorrect.

Also, our cars are equipped with a radar which is used to estimate the distance to vehicles and other objects ahead of the car, while newer Teslas only have optical sensors. FSD Beta software eliminates the use of the radar and only uses the optical sensors. Tesla calls this combination of optical sensors only and enhanced AI algorithms for self-driving “Tesla Vision”. We’re wondering if the removal of the radar explains why the experience has been so much worse than we expected it to be.

Bottom Line

The bottom line is that we are quite surprised at how poorly Full Self-Driving Beta has performed on my car. We’re still bullish on self-driving as a long-term future goal, but we’re sad that it feels much further away in time than we expected. I look forward to Steve’s experience with FSD Beta on his Model Y and hope that maybe it works better for him. For now, I only rarely let my car drive itself because it’s just too darn nerve-wracking.